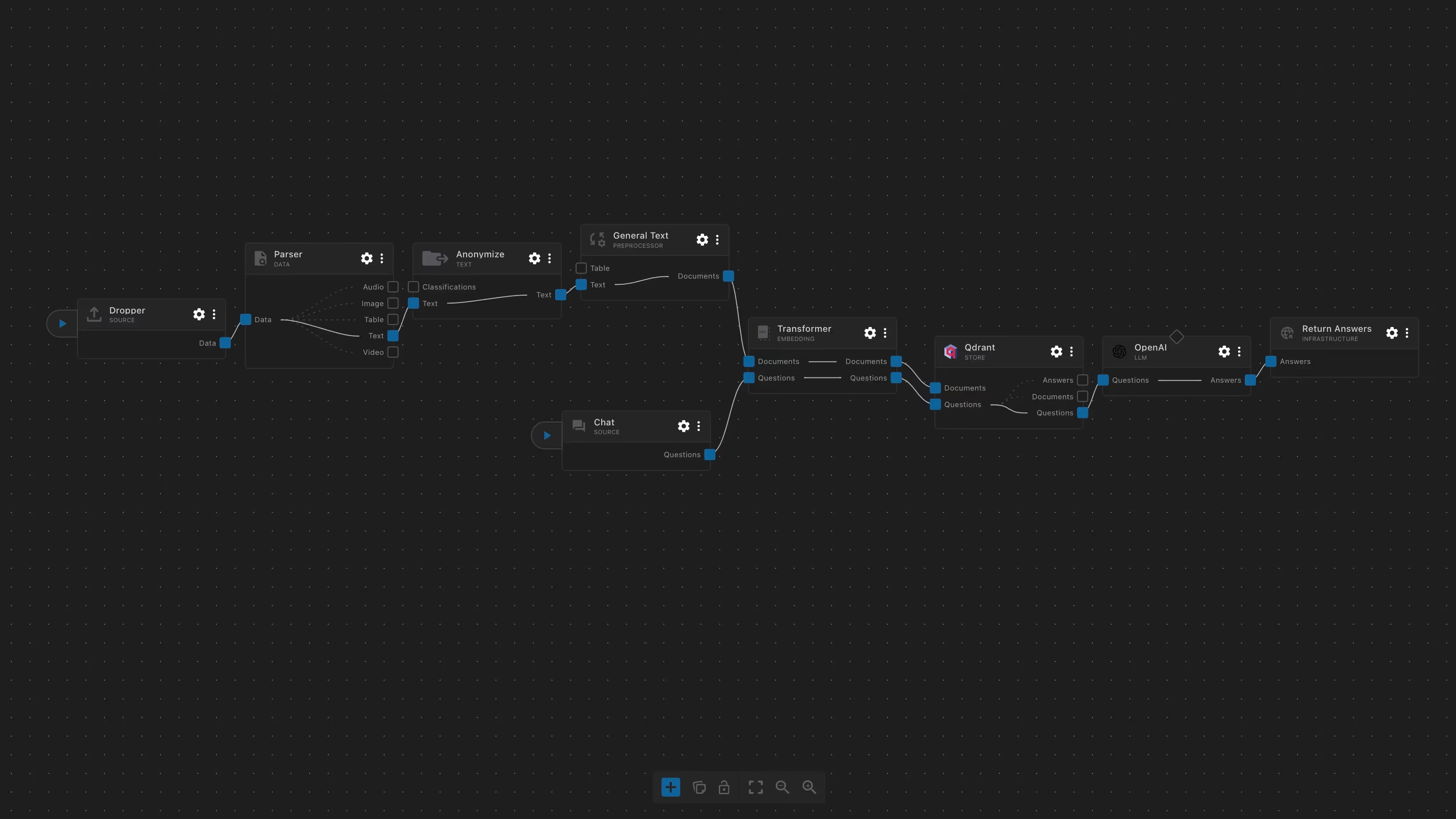

Real-Time Evaluation Pipeline

LLM Benchmark Comparison Pipeline

Description: A real-time evaluation pipeline that routes a single benchmark-style question simultaneously to four leading large language models — Claude Sonnet 4.6 (Anthropic), GPT-5.2 (OpenAI), Gemini 3 Pro (Google), and Grok 3 (xAI).

Each model receives the same fixed prompt instruction so that responses are directly comparable, and all four responses are returned in a single structured JSON payload keyed by provider.
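The fan-out step described above can be sketched roughly as follows. This is a minimal illustration, not the pipeline's actual implementation: the instruction text, the provider keys (`anthropic`, `openai`, `google`, `xai`), and the `make_stub` helper are all assumptions, and the stubs stand in for real provider clients so the example runs without network access.

```python
import asyncio
import json
from typing import Awaitable, Callable, Dict

# Hypothetical fixed instruction; the source does not give the actual prompt text.
SYSTEM_INSTRUCTION = "Answer the question concisely and factually."

# A model call takes (instruction, question) and returns the answer text.
ModelCall = Callable[[str, str], Awaitable[str]]


def make_stub(provider: str) -> ModelCall:
    """Return a stand-in for a real provider client (assumption, not a real SDK call)."""
    async def call(instruction: str, question: str) -> str:
        await asyncio.sleep(0)  # placeholder for network latency
        return f"[{provider}] stub answer to: {question}"
    return call


async def fan_out(question: str, providers: Dict[str, ModelCall]) -> Dict[str, str]:
    """Send the same instruction and question to every provider concurrently."""
    async def safe_call(call: ModelCall) -> str:
        try:
            return await call(SYSTEM_INSTRUCTION, question)
        except Exception as exc:  # one failing provider should not sink the rest
            return f"ERROR: {exc}"

    names = list(providers)
    results = await asyncio.gather(*(safe_call(providers[n]) for n in names))
    return dict(zip(names, results))


# Assumed provider keys; real clients would replace the stubs here.
PROVIDERS = {name: make_stub(name) for name in ("anthropic", "openai", "google", "xai")}


def run(question: str) -> str:
    """Return the JSON payload keyed by provider for a single question."""
    return json.dumps(asyncio.run(fan_out(question, PROVIDERS)), indent=2)
```

Calling `run("What is the capital of France?")` yields one JSON object with four keys, one per provider. Wrapping each call in `safe_call` is a design choice for this sketch: an error from one provider becomes that provider's value instead of discarding the other three responses.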

Designed for human-in-the-loop evaluation, the pipeline pairs with a lightweight frontend where an evaluator can input a question, record an expected answer, and compare all four model responses side by side — making it ideal for factual accuracy benchmarking, knowledge cutoff testing, and identifying model-specific failure modes.
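As one possible aid to the side-by-side comparison, a frontend could pre-flag which responses contain the recorded expected answer. The sketch below is an assumption about how that might look, not a feature the source describes; `normalize` and `flag_matches` are hypothetical helper names, and the whole-word containment check is a crude heuristic that leaves the final judgment to the evaluator.

```python
import re
from typing import Dict


def normalize(text: str) -> str:
    """Lowercase and collapse punctuation/whitespace so trivial differences are ignored."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def flag_matches(expected: str, responses: Dict[str, str]) -> Dict[str, bool]:
    """Mark a provider True if its response contains the expected answer as whole words."""
    target = normalize(expected)
    if not target:
        # No expected answer recorded: nothing can be flagged automatically.
        return {provider: False for provider in responses}
    padded_target = f" {target} "
    return {
        provider: padded_target in f" {normalize(response)} "
        for provider, response in responses.items()
    }
```

For example, with an expected answer of `"Paris"`, a response of `"The capital is Paris."` is flagged, while `"Lyon"` is not. A flag only means the expected string appears in the response, so paraphrased or partially correct answers still need human review.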
